It is expensive and hard to integrate and maintain such systems. Without Data Factory, enterprises must build custom data movement components or write custom services to integrate these data sources and processing. Then move the data as-needed to a centralized location for subsequent processing. These sources include SaaS services, file shares, FTP, and web services. The first step in building an information production system is to connect to all the required sources of data and processing. The pipelines (data-driven workflows) in Azure Data Factory typically perform the following three steps:Įnterprises have data of various types that are located in disparate sources.

You can also run a workflow just one time. For example, a pipeline might read input data, process data, and produce output data once a day. The transformations process data by using compute services rather than by adding derived columns, counting the number of rows, sorting data, and so on.Ĭurrently, in Azure Data Factory, the data that workflows consume and produce is time-sliced data (hourly, daily, weekly, and so on).

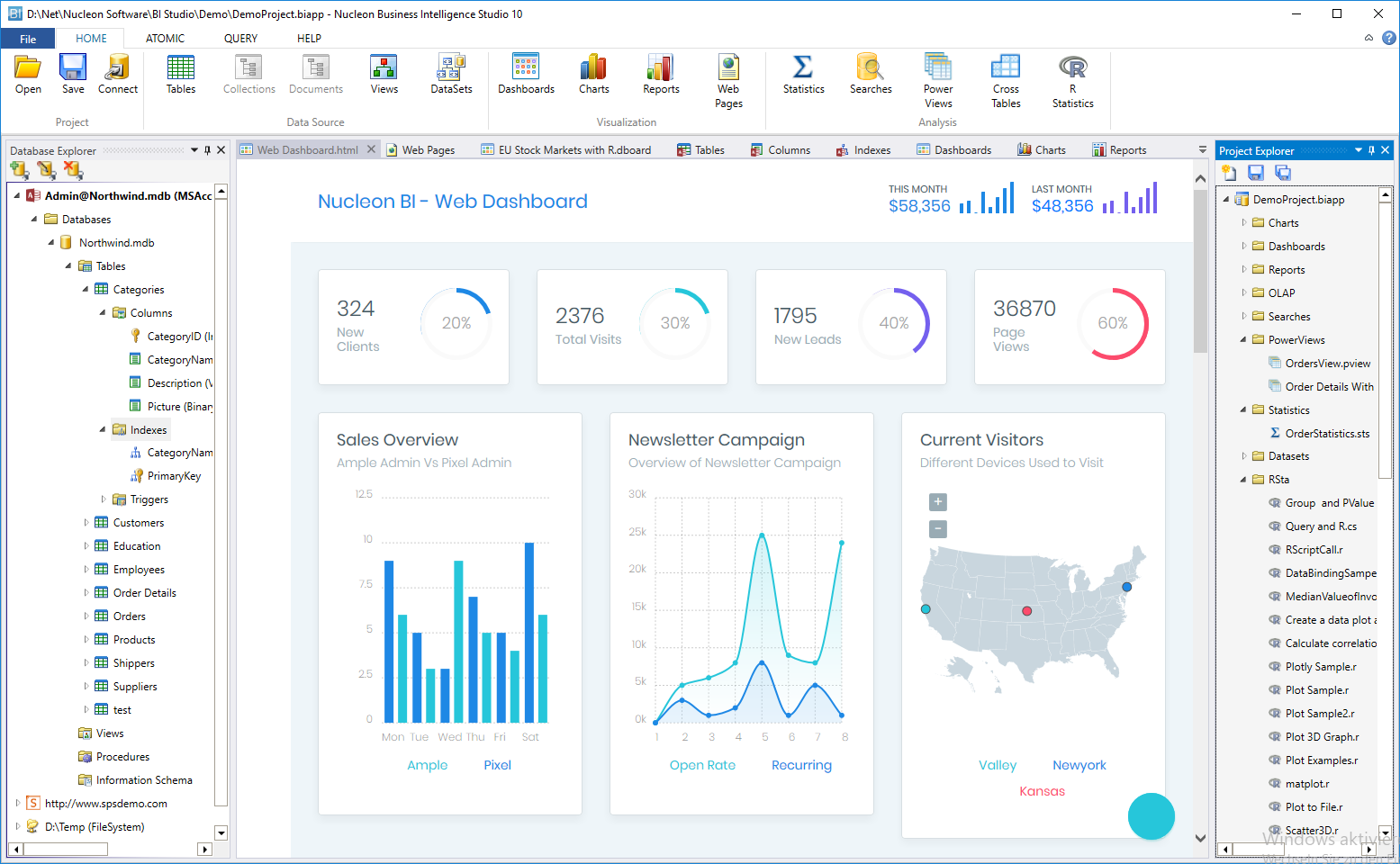

It's more of an Extract-and-Load (EL) and Transform-and-Load (TL) platform rather than a traditional Extract-Transform-and-Load (ETL) platform. Publish output data to data stores such as Azure Synapse Analytics for business intelligence (BI) applications to consume. Process or transform the data by using compute services such as Azure HDInsight Hadoop, Spark, Azure Data Lake Analytics, and Azure Machine Learning. Using Azure Data Factory, you can do the following tasks:Ĭreate and schedule data-driven workflows (called pipelines) that can ingest data from disparate data stores. It is a cloud-based data integration service that allows you to create data-driven workflows in the cloud that orchestrate and automate data movement and data transformation. The company also needs to be able to transform or process data by using existing compute services such as Hadoop, and publish the results to an on-premises or cloud data store for BI applications to consume.Īzure Data Factory is the platform for these kinds of scenarios. The company needs a platform where they can create a workflow that can ingest data from both on-premises and cloud data stores. The company wants this workflow to run once a week. They want to publish the result data into a cloud data warehouse such as Azure Synapse Analytics or an on-premises data store such as SQL Server. Next they want to process the data by using Hadoop in the cloud (Azure HDInsight). Therefore, the company wants to ingest log data from the cloud data store and reference data from the on-premises data store. To analyze these logs, the company needs to use the reference data such as customer information, game information, and marketing campaign information that is in an on-premises data store. The company also wants to identify up-sell and cross-sell opportunities, develop compelling new features to drive business growth, and provide a better experience to customers. 
It wants to analyze these logs to gain insights into customer preferences, demographics, usage behavior, and so on. In the world of big data, how is existing data leveraged in business? Is it possible to enrich data that's generated in the cloud by using reference data from on-premises data sources or other disparate data sources?įor example, a gaming company collects logs that are produced by games in the cloud. If you are using the current version of the Data Factory service, see Introduction to Data Factory V2.

This article applies to version 1 of Azure Data Factory.
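The following toy, in-memory model illustrates the ideas above. A pipeline runs once per time slice and performs the ingest, transform, and publish steps for that slice. It is only a conceptual sketch, not how a Data Factory pipeline is authored, and it does not use any Azure API. Every name in it (`Slice`, `time_slices`, `ingest`, `transform`, `publish`, `run_pipeline`, and the sample records) is hypothetical. The "transform" step is a plain dictionary lookup that stands in for a compute service such as HDInsight. It enriches cloud log rows with on-premises reference data, as in the gaming example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Slice:
    """Hypothetical time slice: the half-open window [start, end)."""
    start: datetime
    end: datetime


def time_slices(first: datetime, last: datetime, period: timedelta) -> list[Slice]:
    """Split the active period [first, last) into consecutive slices of `period`,
    e.g. timedelta(hours=1), timedelta(days=1), or timedelta(weeks=1)."""
    slices = []
    start = first
    while start < last:
        end = min(start + period, last)  # clip the final slice to the active period
        slices.append(Slice(start, end))
        start = end
    return slices


def ingest(logs: list[dict], s: Slice) -> list[dict]:
    """Step 1 (hypothetical): collect only the log rows whose timestamp is in this slice."""
    return [r for r in logs if s.start <= r["ts"] < s.end]


def transform(rows: list[dict], customers: dict[int, str]) -> list[dict]:
    """Step 2 (hypothetical): enrich log rows with reference data.
    A dictionary lookup stands in for a real compute service."""
    return [{**r, "customer": customers.get(r["customer_id"], "unknown")} for r in rows]


def publish(sink: dict, s: Slice, rows: list[dict]) -> None:
    """Step 3 (hypothetical): write the slice's output to a sink (an in-memory dict here)."""
    sink[s] = rows


def run_pipeline(logs, customers, first, last, period):
    """Run ingest -> transform -> publish once for every time slice."""
    sink: dict = {}
    for s in time_slices(first, last, period):
        publish(sink, s, transform(ingest(logs, s), customers))
    return sink


if __name__ == "__main__":
    logs = [  # hypothetical cloud log records
        {"ts": datetime(2024, 1, 1, 9), "customer_id": 1, "game": "A"},
        {"ts": datetime(2024, 1, 2, 17), "customer_id": 2, "game": "B"},
    ]
    customers = {1: "Contoso"}  # hypothetical on-premises reference data
    out = run_pipeline(logs, customers, datetime(2024, 1, 1), datetime(2024, 1, 3),
                       timedelta(days=1))
    for s, rows in out.items():
        print(s.start.date(), [r["customer"] for r in rows])
    # prints:
    # 2024-01-01 ['Contoso']
    # 2024-01-02 ['unknown']
```

Each slice is processed independently, so the output for one day can be produced (or rerun) without touching any other day. To model the weekly workflow from the gaming scenario, pass `timedelta(weeks=1)` as the period.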
